The 89% Problem: How LLMs Are Resurrecting the "Dormant Majority" of Open Source

March 4, 2026

AI coding assistants are quietly resurrecting millions of abandoned open source packages. For the last decade, developers relied on a simple heuristic for open source security: Prevalence = Trust. If a package was downloaded millions of times a week (lodash, react, requests), we assumed it was "safe enough" because thousands of eyes were on it. If it was obscure, we approached with caution.

Human developers follow social signals of trust, such as popularity, maintenance activity, and community adoption, and this "Wisdom of the Crowds" model worked because human developers are fundamentally social. We stick to the "paved roads" built by our peers. Generative AI, however, is starting to break this model.

AI systems select packages based on statistical patterns in their training data. LLMs are stochastic; they do not understand "popularity" or "maintenance health" the way a human architect or engineer does. Rather, they model statistical probability over training data that spans the entire history of the internet: the good, the bad, and the abandoned, including millions of abandoned projects and experimental repositories.

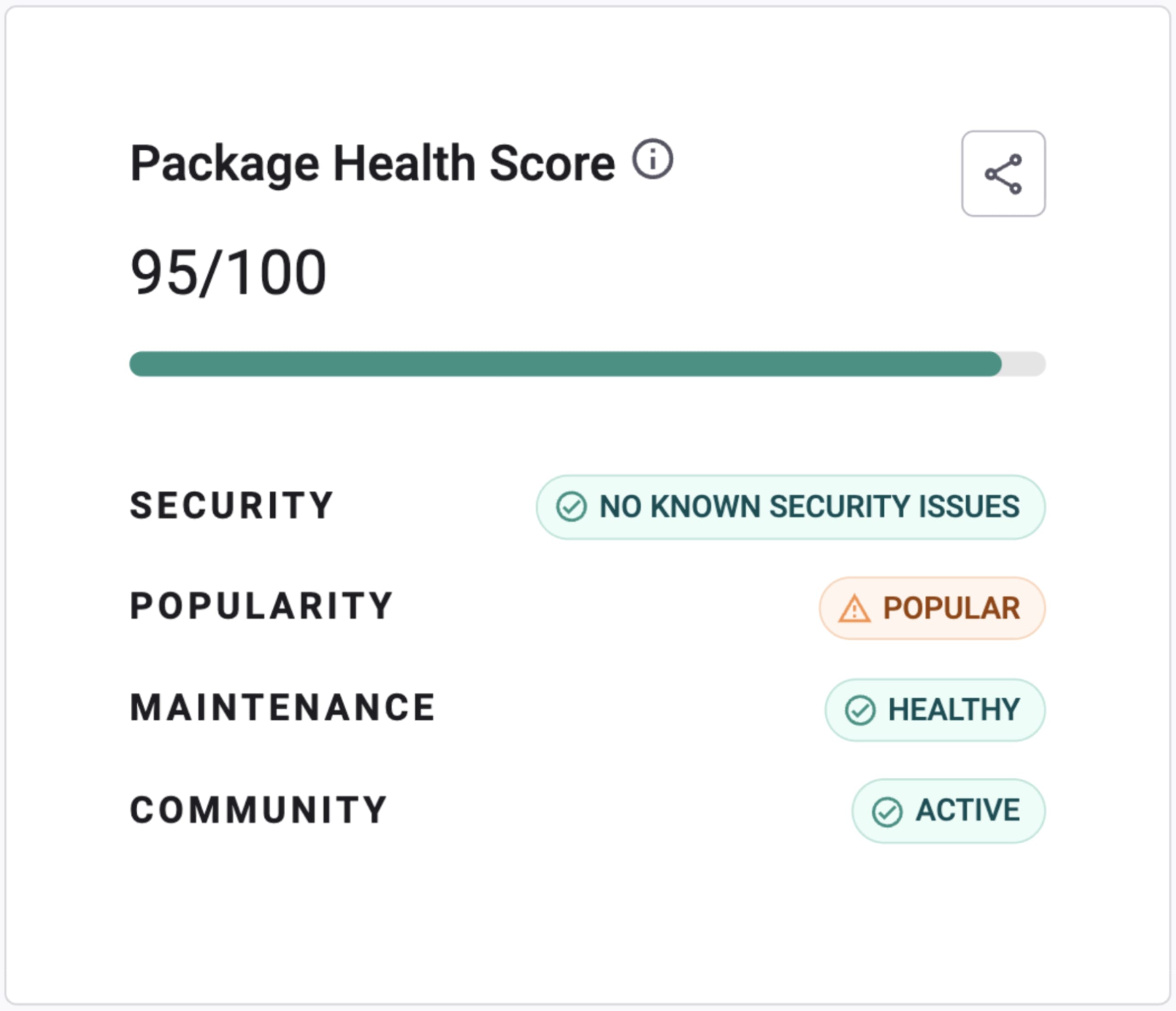

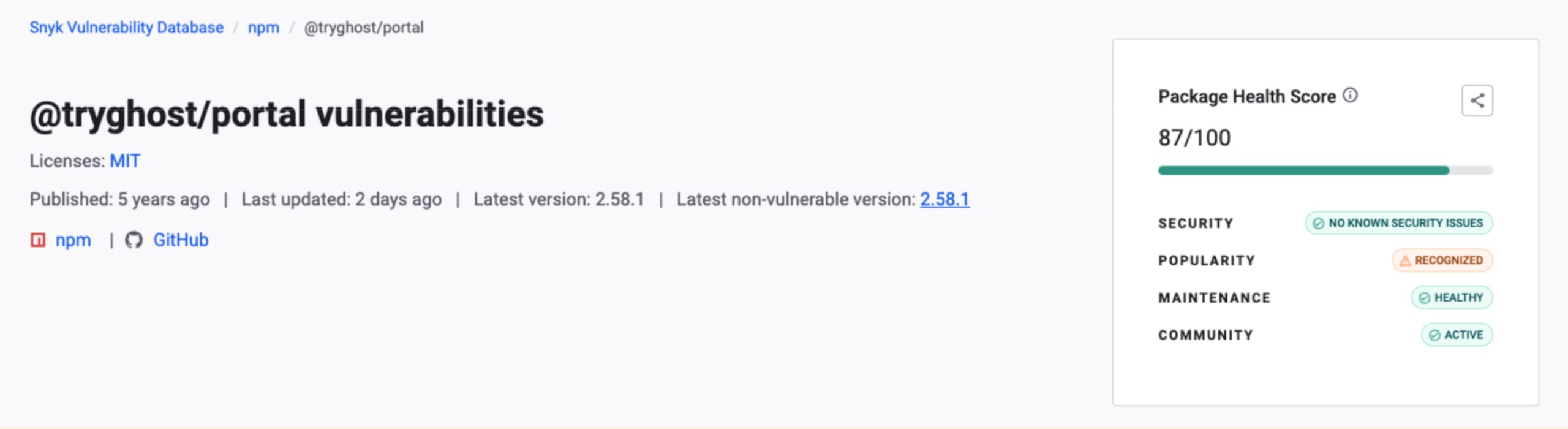

We built Snyk Advisor and then merged it into our Security DB to help bridge the gap between open source intelligence and package health, providing developers and agents with various data points on security, popularity, maintenance, and community. How does Snyk’s package health amplify AI agents? Here’s our take on it.

The data: Visualizing the "Dormant Majority"

When we analyze the open source ecosystem, a striking pattern emerges. A very small number of packages power most of the modern internet, while the vast majority are rarely used or have been completely abandoned.

To understand the risk, we need to revisit the structure of the open source ecosystem. Snyk contributed key data to the Linux Foundation & Harvard Census II Report, mapping the reality of the supply chain. When we overlay package health data on top of prevalence, a stark hierarchy emerges:

| Usage Tier | Found in % of Projects | Population Size (Approx.) | Description | Package health on Snyk Advisor | Examples |
|---|---|---|---|---|---|
| The Global Constants | 90% – 100% | \~1,000 packages | The "plumbing" of the internet. Deep transitive dependencies almost every modern app relies on. | | |
| The Industry Standards | 15% – 50% | \~20,000 packages | The primary frameworks developers explicitly choose to build core architecture. | | |
| The Domain Specialists | 1% – 5% | \~100,000 packages | Professional-grade tools for specific industries or complex technical niches. | | |
| The Long-Tail Active | \< 0.1% | \~600,000+ packages | Valid, working code used in very specific scenarios or by a dedicated community. | | |
| The Dormant Majority | \~0% | \~6.3 Million+ packages | The 89.5%. Abandoned projects, "Hello World" tests, unmaintained forks, single-use experiments. | | |

Nearly 90% of the open source ecosystem belongs to the Dormant Majority – millions of abandoned experiments, forks, and unmaintained projects. Human developers rarely select packages from this tier; AI systems, however, do.
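As a quick sanity check on that proportion, the approximate tier populations from the table can be summed directly. The counts below are the rounded values from the table with the "+" suffixes dropped, so the result is indicative only.

```python
# Approximate tier populations, taken from the table (rounded, "+" dropped).
TIER_POPULATIONS = {
    "Global Constants": 1_000,
    "Industry Standards": 20_000,
    "Domain Specialists": 100_000,
    "Long-Tail Active": 600_000,
    "Dormant Majority": 6_300_000,
}


def tier_shares(populations: dict[str, int]) -> dict[str, float]:
    """Return each tier's share of the total package count, as a percentage."""
    total = sum(populations.values())
    return {tier: 100 * count / total for tier, count in populations.items()}
```

With these rounded inputs, `tier_shares(TIER_POPULATIONS)["Dormant Majority"]` lands just under 90%, while the top four tiers combined account for roughly a tenth of all packages. The exact value depends on the unrounded counts.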

The AI disconnect

Human developers naturally stay in the top tiers of the ecosystem – widely used frameworks, trusted infrastructure libraries, and mature domain tools. Because LLMs are trained on vast repositories of code spanning the entire history of the internet, they may surface packages from anywhere in the ecosystem, including the Dormant Majority.

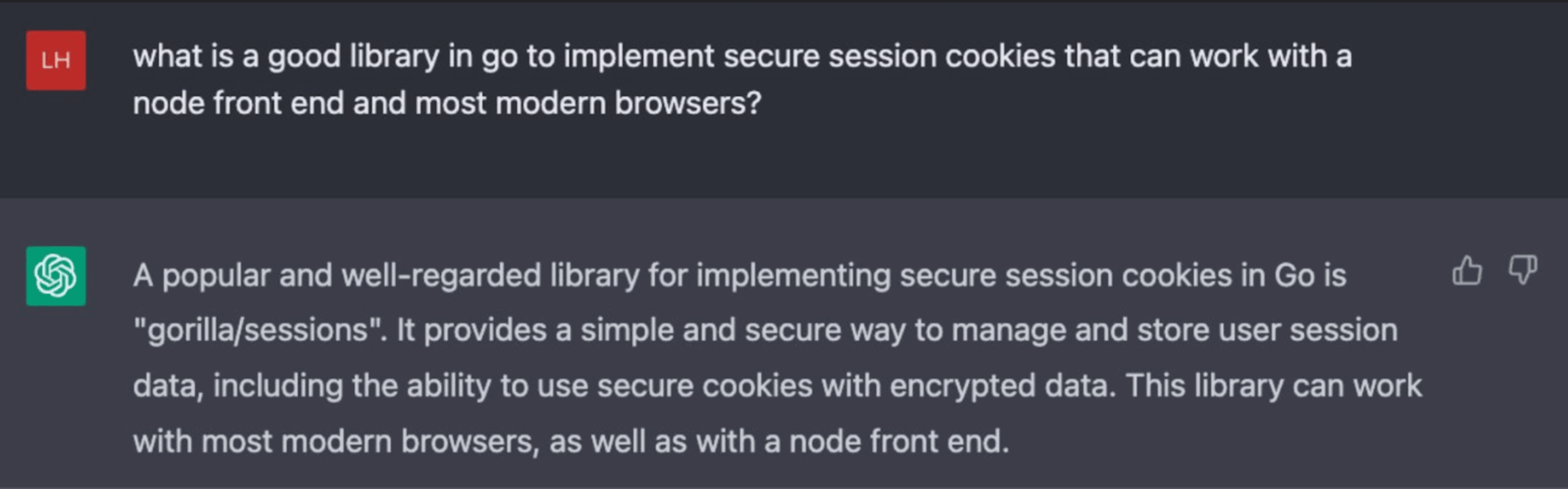

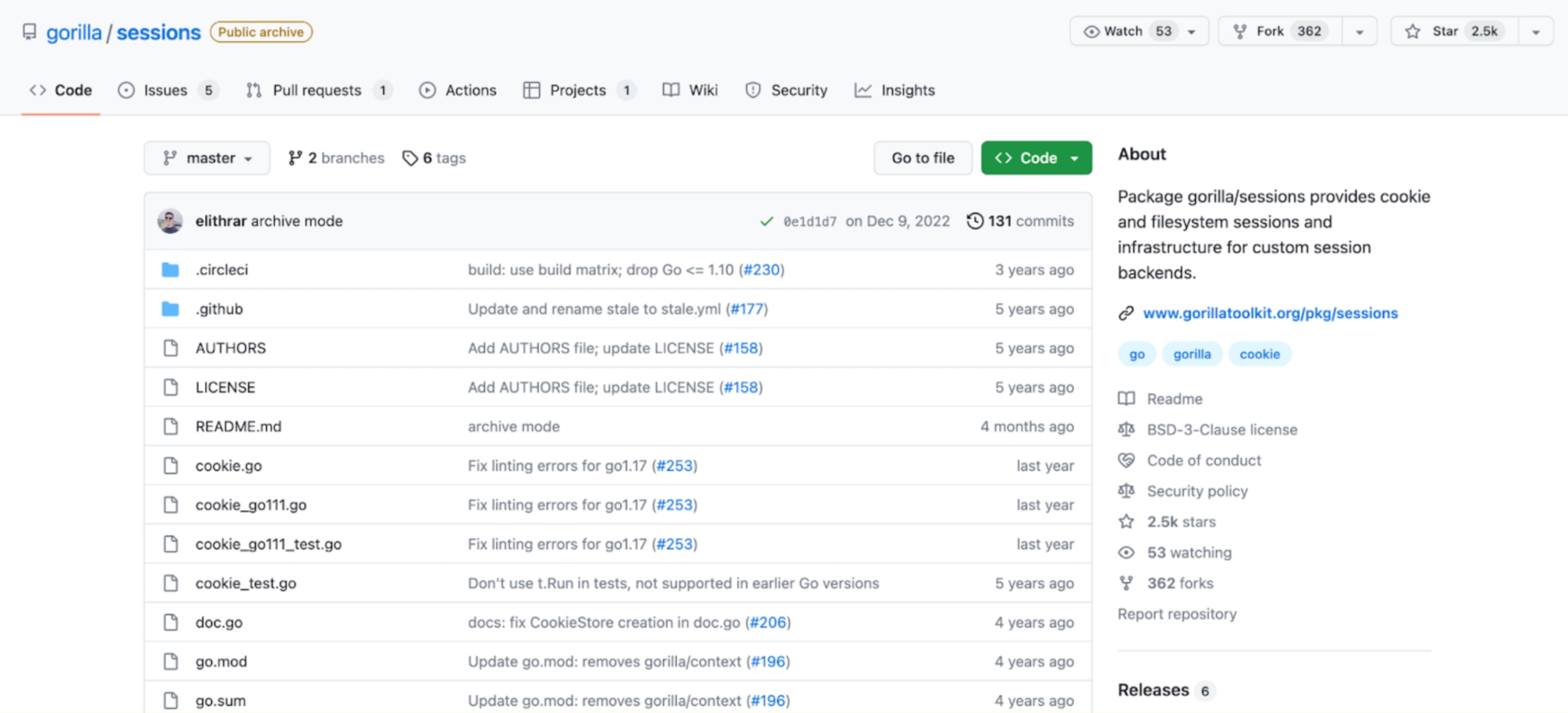

As a result, LLMs can recommend packages from the bottom 89.5% of the ecosystem: abandoned projects, unmaintained forks, and even simple “Hello World” experiments. For example, security researcher Luke Hinds shared an interaction where an LLM recommended the Go package gorilla/sessions:

The problem with this LLM recommendation is that gorilla/sessions has been archived. Because archived repositories no longer receive updates, using this package introduces long-term maintenance debt and unpatched supply chain risks.

Worse, LLMs suffer from hallucinations (or "AI Package Hallucinations"), confidently recommending packages that never existed. Together, dormant recommendations and hallucinated names create two new attack vectors:

The zombie resurrection: An LLM suggests an unmaintained, 5-year-old package from the "Dormant Majority" because it solves a specific niche problem. It has 0 CVEs (because nobody looks for them) but contains critical flaws.

Slopsquatting (AI Hallucination Attacks): Attackers predict common package names that LLMs hallucinate (e.g., huggingface-cli-tool vs the real huggingface-cli) and register them with malicious payloads. When an AI suggests this "logical" but fake package, the developer installs malware.
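To make the "zombie resurrection" vector more concrete, here is a minimal sketch of a staleness check. It assumes the package's source lives on GitHub and that you have already fetched its repository object from the GitHub REST API, which includes an `archived` flag and a `pushed_at` timestamp. The `assess_repo` function, the `RepoVerdict` type, and the two-year idle threshold are illustrative assumptions, not part of any Snyk product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class RepoVerdict:
    dormant: bool
    reason: str


def assess_repo(
    metadata: dict,
    now: datetime,
    max_idle: timedelta = timedelta(days=730),  # assumed threshold: ~2 years
) -> RepoVerdict:
    """Flag a repository as dormant if it is archived or idle for too long.

    `metadata` is the parsed JSON of a GitHub repository object; only the
    `archived` and `pushed_at` fields are read. `now` must be timezone-aware.
    """
    if metadata.get("archived", False):
        return RepoVerdict(True, "repository is archived")

    pushed_at = metadata.get("pushed_at")
    if pushed_at is None:
        return RepoVerdict(True, "no push timestamp available")

    # GitHub timestamps look like "2023-01-15T12:00:00Z".
    last_push = datetime.strptime(pushed_at, "%Y-%m-%dT%H:%M:%SZ").replace(
        tzinfo=timezone.utc
    )
    idle = now - last_push
    if idle > max_idle:
        return RepoVerdict(True, f"no pushes for {idle.days} days")
    return RepoVerdict(False, "recent activity")
```

A passing verdict here only says the repository shows signs of life. It says nothing about code quality or undiscovered flaws, which is why it complements rather than replaces vulnerability data.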

The strategic pivot: From "popularity" to "provenance"

As a CISO, you cannot rely on your developers to manually vet every AI suggestion. The velocity is too high. You need to shift your program from "scanning for bad" to "ensuring good". In past write-ups, we’ve outlined the roles of CISOs and evolving responsibilities.

Similarly, as an engineer, you cannot rely on AI coding agents to choose and install packages from npm or PyPI end-to-end. The software supply chain carries inherent risk: a bad package can introduce malware or data harvesting, for example through npm's postinstall lifecycle scripts.

How do we equip AI coding agents and software engineers with package health heuristics to achieve more secure, autonomous results? With Snyk’s paved road.

1. Discover trusted packages with security.snyk.io

Before selecting a dependency, developers and AI systems need visibility into the reputation and trustworthiness of open source packages. The new Snyk Security Database experience provides a centralized view of package trust signals, helping teams quickly identify widely adopted, well-maintained, and reputable projects across supported ecosystems.

Strategy: Encourage developers and platform teams to use Snyk Security Database insights during package discovery to prioritize mature, trusted dependencies early in the selection process.

2. Snyk Package Health API helps verify health, not just vulnerabilities

A package with zero known vulnerabilities is not necessarily safe. It may be abandoned, unmaintained, or lacking a trusted community. Secure software supply chains require evaluating package health beyond CVEs. The Snyk Package Health API provides package-level and version-level intelligence across major ecosystems (npm, PyPI, Maven, NuGet, and Go), exposing signals such as:

Security posture.

Maintenance activity and lifecycle indicators.

Popularity and adoption metrics.

Community engagement signals.

This allows engineering platforms, CI/CD pipelines, and AI-driven development tools to automatically evaluate the quality, sustainability, and ecosystem risk of a dependency at the moment it is being selected or introduced.

Strategy: Integrate package health intelligence directly into dependency-selection workflows and AI-assisted development environments, so package suitability can be evaluated before a dependency is added, not after it is installed.

Tooling: Use the Snyk Package Health API to inform package selection, upgrade planning, and automated dependency governance. See the Package level endpoint and the Package version level endpoint for implementation details.
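A minimal sketch of such a policy gate follows. It deliberately does not call the Snyk Package Health API: the `HealthSignals` fields and the thresholds are hypothetical stand-ins for whatever signals your integration actually receives, so consult the endpoint documentation for the real response schema.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REVIEW = "review"
    BLOCK = "block"


@dataclass
class HealthSignals:
    # Hypothetical, simplified signals; not the actual API response schema.
    known_vulnerabilities: int
    is_malicious: bool
    days_since_last_release: int
    weekly_downloads: int


def evaluate(signals: HealthSignals) -> tuple[Decision, list[str]]:
    """Map health signals to a decision plus the reasons behind it."""
    if signals.is_malicious:
        return Decision.BLOCK, ["flagged as malicious"]

    reasons = []
    if signals.known_vulnerabilities > 0:
        reasons.append(f"{signals.known_vulnerabilities} known vulnerabilities")
    if signals.days_since_last_release > 730:  # assumed threshold: ~2 years
        reasons.append("no release in over two years")
    if signals.weekly_downloads < 100:  # assumed threshold
        reasons.append("very low adoption")

    return (Decision.REVIEW if reasons else Decision.ALLOW), reasons
```

Note the design choice: a package with zero known vulnerabilities can still come back as `REVIEW` if it looks abandoned or barely adopted, which mirrors the point that health is broader than CVE counts.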

If you integrate an SCA tool via the CLI, CI, or GitHub checks, Snyk Open Source will also catch these ill-advised LLM suggestions before and during a build (imagine the coding agent plans to run an npm install … for a malicious package).

3. Enforce dependency safety at introduction with Snyk Studio

Even when intelligence is available, developers and AI coding assistants may still introduce dependencies automatically. Security must therefore be enforced at the moment a dependency is selected, not only during CI/CD scans.

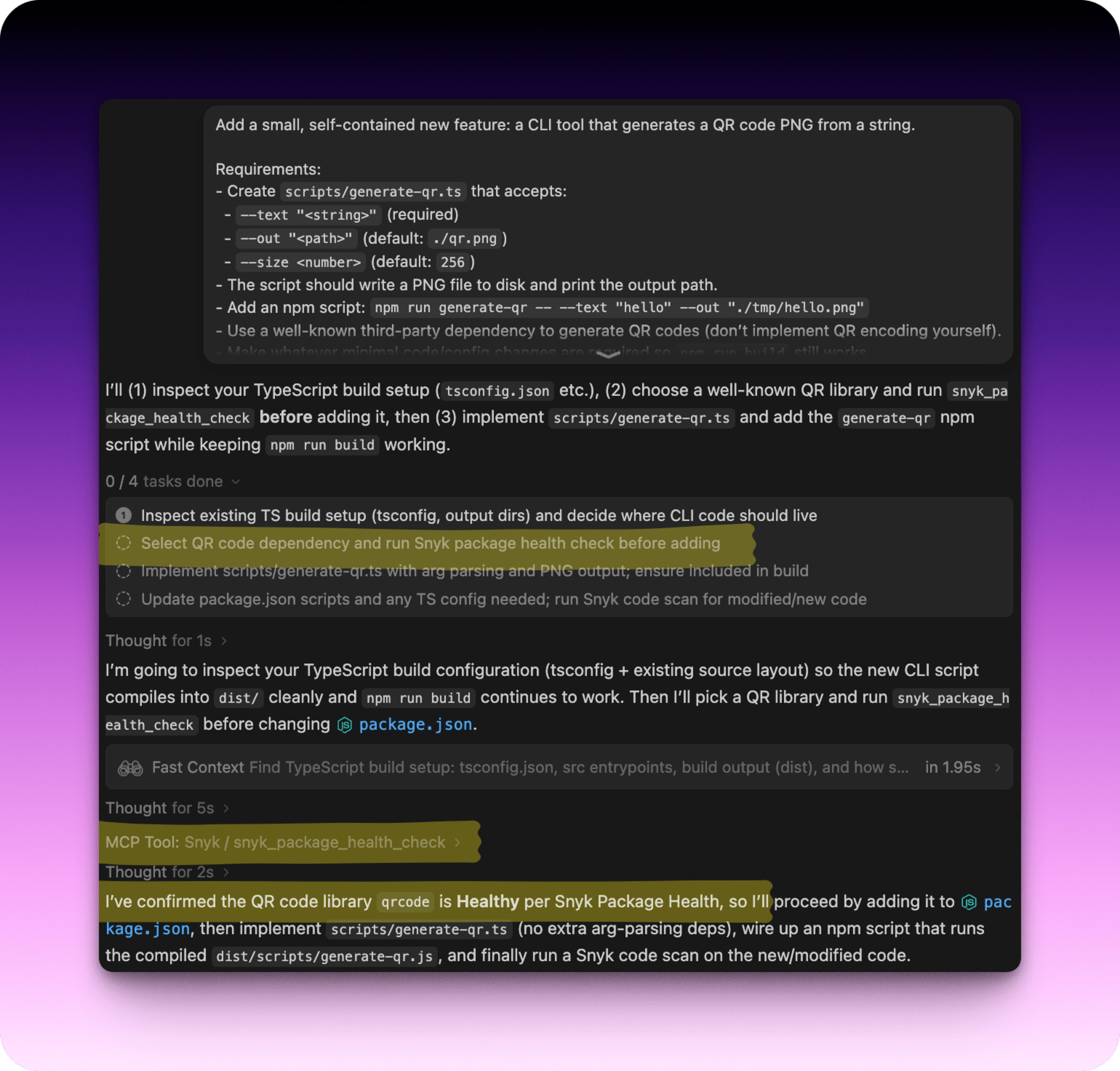

The Snyk Studio Package Health flow integrates package health intelligence directly into AI-assisted development workflows. When an AI coding assistant proposes adding or updating a dependency, Snyk Studio can automatically invoke the package health check before the dependency is installed, ensuring that risk signals are evaluated in real time. This allows organizations to prevent unhealthy, unmaintained, or risky packages from entering their codebase at the earliest possible stage - the “secure at inception” moment.

Strategy: Configure AI coding assistants to automatically run Package Health checks before introducing new dependencies, pausing or blocking installation when risk signals are present, and requiring explicit user approval to proceed.
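One way to picture this gate is a small pre-install hook that inspects the command an agent proposes to run and extracts the package names to be checked. The sketch below is an illustration under stated assumptions, not a description of how Snyk Studio works internally: it recognizes only simple `npm install`, `npm i`, and `npm add` invocations, does not handle flags that take a separate value argument, and `check` is a hypothetical callback standing in for a real package health lookup.

```python
import shlex
from typing import Callable

NPM_INSTALL_VERBS = {"install", "i", "add"}


def packages_from_command(command: str) -> list[str]:
    """Extract package specs from a simple `npm install ...` command line."""
    tokens = shlex.split(command)
    if len(tokens) < 2 or tokens[0] != "npm" or tokens[1] not in NPM_INSTALL_VERBS:
        return []
    # Skip flags such as --save-dev; everything else is treated as a package spec.
    return [t for t in tokens[2:] if not t.startswith("-")]


def strip_version(spec: str) -> str:
    """Drop a trailing @version, keeping a leading scope (e.g. @scope/name@1.2.3)."""
    at = spec.rfind("@")
    return spec[:at] if at > 0 else spec


def gate_install(command: str, check: Callable[[str], bool]) -> tuple[bool, list[str]]:
    """Return (proceed, rejected_names); proceed only if every package passes `check`."""
    names = [strip_version(spec) for spec in packages_from_command(command)]
    rejected = [name for name in names if not check(name)]
    return (not rejected, rejected)
```

When `gate_install` returns `False`, the calling workflow would pause and surface the rejected names for explicit user approval instead of running the command.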

4. Defend against hallucinations

AI coding assistants may occasionally recommend packages that do not exist in public registries. These “package not found” events should be treated as potential supply-chain security signals rather than simple developer errors, as attackers may later register packages with similar names to exploit these mistakes.

Strategy: Treat dependency-resolution failures (for example, “package not found”) as security-relevant events. Investigate the source of the dependency suggestion and validate whether the package name is legitimate before proceeding.
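As an illustration of treating a failed lookup as a signal, the sketch below classifies a requested package name against a locally maintained set of approved names. The allowlist, the `classify_name` function, and the similarity cutoff are assumptions made for the example; a real workflow would also consult the registry itself.

```python
import difflib
from dataclasses import dataclass


@dataclass
class NameFinding:
    status: str            # "approved", "lookalike", or "unknown"
    similar_to: list[str]  # approved names the request resembles


def classify_name(requested: str, approved: set[str], cutoff: float = 0.8) -> NameFinding:
    """Classify a package name relative to an approved set.

    A "lookalike" is an unapproved name that closely resembles an approved one,
    which is the pattern slopsquatting relies on.
    """
    name = requested.strip().lower()
    if name in approved:
        return NameFinding("approved", [])
    # Sort for deterministic ordering; the cutoff is an assumed similarity threshold.
    close = difflib.get_close_matches(name, sorted(approved), n=3, cutoff=cutoff)
    return NameFinding("lookalike" if close else "unknown", close)
```

A "lookalike" result is the case worth escalating: the name is not approved, yet it sits close enough to a trusted package that a developer might install it without a second look.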

In the following image, you can see how Snyk Studio is invoked by the Windsurf coding agent to perform package health analysis via the snyk_package_health_check tool, one of the security tools in the Snyk MCP Server. With Snyk Studio installed, the AI agent can then confirm the package is maintained, has no known security issues, and is not malicious.

Snyk’s mission to secure AI-generated code

We built Snyk on the belief that developer-first security is the only way to scale. In the world of AI coding agents, this is doubly true. The Census II data shows us that the open source ecosystem is vast and mostly dormant.

Our job is to keep your AI and developers focused on the healthy, vibrant top tiers, the 10% that powers the world, and to automate defenses against the chaotic 90%. Don't let your AI verify trust. Verify the AI's trust.

Want to learn more about how AI coding assistants are reshaping software supply chain risk? Explore our guide on securing Python in the age of AI.
