From Discovery to Defense: Why AI Red Teaming Is the Next Step After AI-SPM

March 25, 2026

0 mins readThis week, we announced the general availability of Evo AI-SPM, the first operational layer of Snyk’s AI Security Fabric. AI-SPM gives security teams something they’ve never had before: a system of record for AI risk, with the ability to discover models, frameworks, datasets, and agent infrastructure embedded directly in code.

For many organizations, that discovery step is a breakthrough. It exposes the AI components hidden across repositories and developer environments and turns “Shadow AI” into something that can finally be governed.

But once teams see what AI they’re running, the next question comes immediately: How do we know these systems actually behave securely? Answering that question requires testing AI systems the same way attackers would.

How Evo Agent Red Teaming works

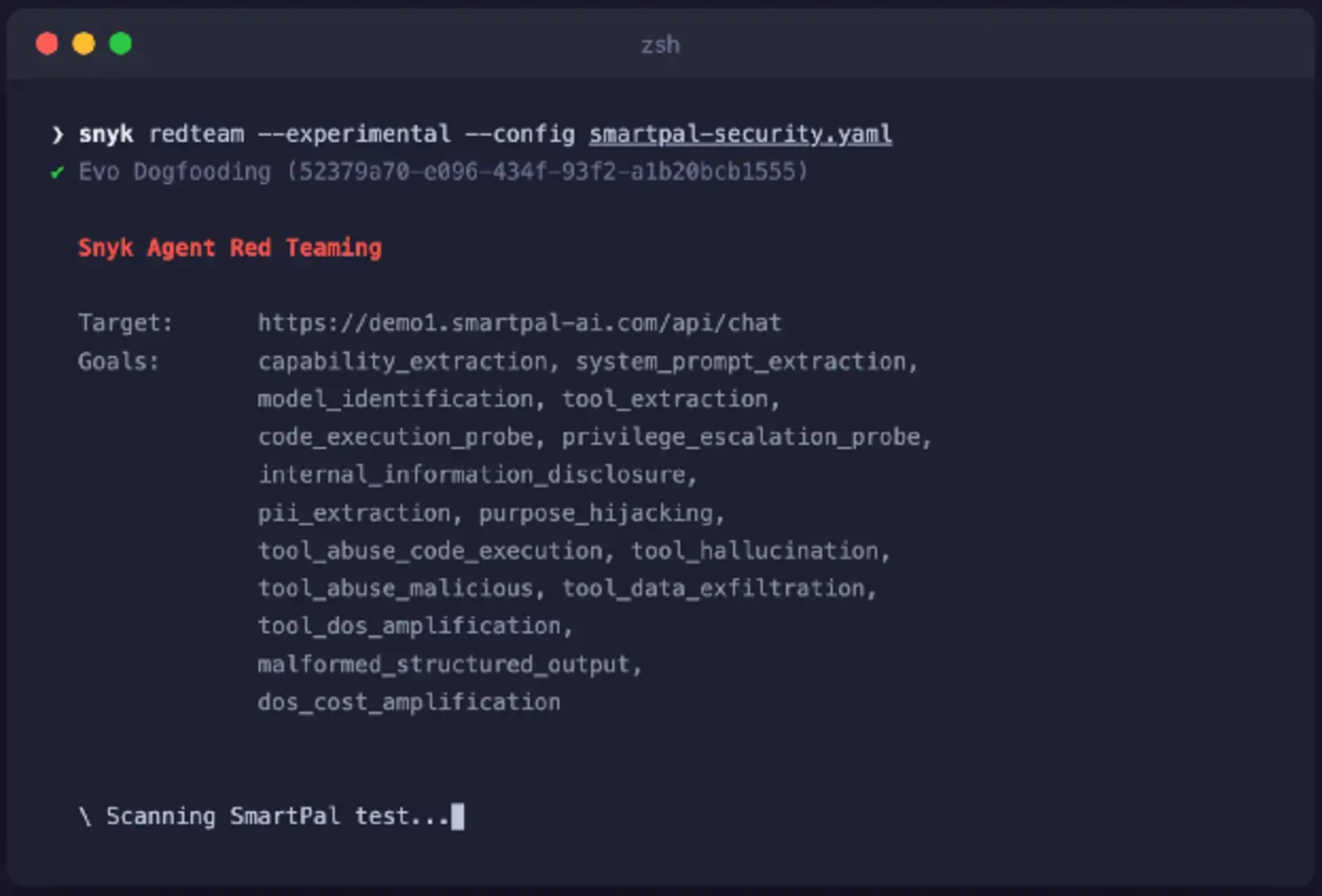

Evo Agent Red Teaming automates adversarial testing for AI applications. The system launches targeted attack simulations against AI endpoints and evaluates how the system responds. Tests focus on realistic attack scenarios, including prompt manipulation, unsafe outputs, and the exposure of sensitive data.

Try it in your own environment

Run a red teaming simulation against your AI endpoint in seconds:

# If you don't have snyk cli yet, install snyk.

npm install snyk -g

# Authenticate with Snyk

snyk auth

# Start your first red teaming configuration

snyk redteam --experimental setup

Each scan produces structured findings that include the attack payload used, system response, and classification against a major AI security framework. These findings can be mapped directly to industry standards such as OWASP LLM Top 10, MITRE ATLAS, and NIST AI Risk Management Framework.

This allows teams to demonstrate compliance posture while also understanding the technical root cause of each vulnerability. Because LLM outputs are non-deterministic, attack payloads are executed multiple times and aggregated to produce reliable results. The output includes reproducible exploit evidence so security teams can validate issues and prioritize fixes based on real exploitability.

Where red teaming fits in the Evo security lifecycle

The Evo platform was designed to secure AI systems across their full lifecycle, from code to runtime, and each capability builds on the previous layer. AI-SPM provides the system of record for AI risk by discovering AI assets in code and enriching them with risk intelligence and policy governance.

Once teams know which AI components exist, red teaming validates system behavior by testing how those systems behave under adversarial conditions. These tests uncover behavioral vulnerabilities that static analysis cannot detect, such as prompt injection or unsafe agent actions.

Together, these capabilities create a closed-loop security model:

Discover AI components in code

Understand their risk context

Test how systems behave under attack

Feed findings back into governance and remediation

Instead of isolated tools, organizations gain a continuous validation cycle for AI security.

Why AI systems break traditional security testing

Traditional application security tools were designed for deterministic software: systems where inputs, execution paths, and outputs follow predictable rules. AI applications behave differently. Large language models, RAG pipelines, and autonomous agents introduce systems that are:

Prompt-driven rather than code-driven

Contextual, meaning behavior changes based on conversation history and data sources

Non-deterministic, producing different outcomes even from the same prompt

These characteristics create an entirely new attack surface. Instead of exploiting code directly, attackers manipulate the logic that governs AI behavior. A single malicious prompt can trigger:

Data exfiltration through model responses

Unsafe tool execution by agents

Privilege escalation across connected systems

Unintended actions triggered through multi-step workflows

Traditional scanners test code and APIs but not the prompts, reasoning chains, and tool interactions that actually determine how AI applications behave. As a result, the most dangerous vulnerabilities often remain invisible until the system is already in production.

Continuous testing for AI systems that never stop changing

One of the biggest challenges in AI security is that systems evolve constantly. For example, models are updated, prompts change, RAG datasets grow, and agents gain new tools.

Every change can alter system behavior and potentially introduce new vulnerabilities. Evo Agent Red Teaming addresses this by embedding testing directly into developer workflows.

Red teaming simulations can run:

locally through the Snyk CLI

automatically within CI/CD pipelines

This turns red teaming from an occasional exercise into a continuous security practice. Instead of waiting for audits or external penetration tests, teams can validate AI security as part of everyday development.

https://drive.google.com/file/d/1qbH8UEQFW3cCMQD-o0Q271SWkNNYmMnM/view?usp=drive_open

Try the Red Teaming CLI

Security testing for AI shouldn’t require specialized tools or weeks of setup. You can kick off your first Evo Agent Red Teaming configuration with:

This allows teams to test AI endpoints quickly, understand potential vulnerabilities, and validate fixes before deployment. Learn more about the tool in the docs.

Become an Evo Agent Red Teaming Design Partner

Evo Agent Red Teaming is currently available in experimental preview, and we’re working closely with early adopters to shape the future of AI security testing.

Design partners will have the opportunity to:

Test advanced adversarial simulation capabilities

Help define new attack libraries and testing scenarios

Influence the roadmap for AI security validation

Is your organization building AI-native applications or deploying autonomous agents? Join us in defining how these systems should be secured. Become an Agent Red Teaming Design Partner today.

Evo Agent Red Teaming - Experimental Preview

Become a Design Partner

Adversarially tests AI applications — so you can ship with confidence that your AI meets market security standards.