AI Is Building Your Attack Surface. Are You Testing It?

March 19, 2026

0 mins readThe market is flooded with claims. One vendor tops a leaderboard. Another raises nine figures on a pitch deck. Meanwhile, your developers shipped three AI-generated services before lunch. Here's the conversation the industry isn't having, and the one we've been building toward for years.

There's a version of this conversation happening inside every Security team right now.

Someone demos an AI coding assistant. The speed is undeniable and the team is in awe. Still cautious, sometimes skeptical.

Code that used to take a sprint takes an afternoon. Leadership is extremely excited. The developers that will survive this era are, too. And security, if it's honest, is quietly unsure whether the tools they have are built for what's coming.

Narrator: They're not.

The benchmark nobody wants to talk about

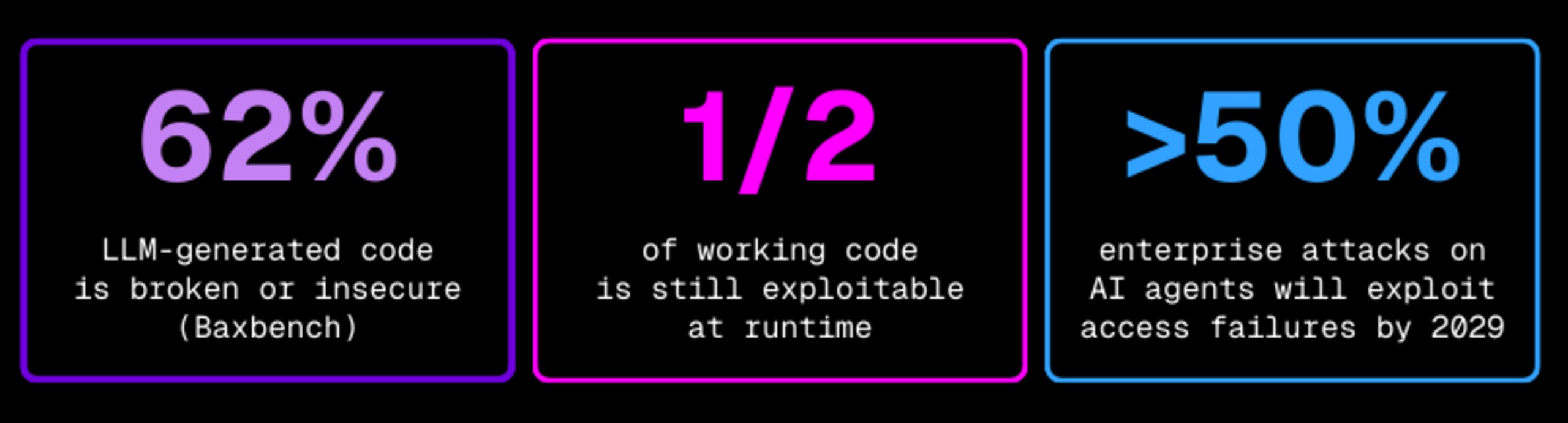

BaxBench isn't a threat report; it's a measurement. When researchers evaluated LLM-generated backend code across real-world tasks, 62% of it was either broken or insecure. Of the code that worked correctly, roughly half was still exploitable.

Read that again: AI writes working code, and half of it is still exploitable.

That's not a reason to stop using AI coding assistants; that ship has long sailed. It's a reason to be precise about what secure means in a world where the code generation rate has been permanently sped up.

Static analysis at the point of creation, whether it's a SAST tool in the IDE, or AI-assisted security guidance in the development loop, is a necessary first step, but not a finish line. It can tell you what exists in the code, but it can't tell you whether that code is actually exploitable in the way a malicious actor would exploit it. Even with the latest multimodal static approaches that combine taint analysis and LLM reasoning, these tools can only predict what may be exploitable in the code, they cannot prove actual runtime behavior under real attack conditions.

For that, you need to probe, from the outside, at runtime: the way a real attacker would.

The second attack surface nobody mapped

While the industry was debating AI code security, a second attack surface was quietly taking shape.

AI agents don't operate in isolation. They call APIs, and often privileged ones, also often undocumented. APIs that were built before anyone expected them to be invoked autonomously and at scale. Gartner projects that by 2028, AI agents will become the primary consumers of enterprise APIs. By 2029, over 50% of successful attacks on AI agents are expected to exploit access control failures (BOLA/IDOR), exactly the class of flaws that static analysis has historically struggled to validate.

This is not a bold prediction; it's a description of architecture decisions your organizations already made.

BodySnatcher made it concrete. CVE-2025-12420, an unauthenticated attacker, with nothing but a target's email address, could impersonate a ServiceNow administrator, bypass MFA and SSO, execute AI agent workflows, and create backdoor accounts with full privileges. Social Security numbers, financial records, confidential IP: all of it ripe for the picking... All of it exposed.

The technique wasn't esoteric: there was no zero-day, no clever prompt injection. The vulnerability was sitting untested behind an agent that an organization trusted completely. The researcher's conclusion was precise: "AI agents significantly amplify the impact of traditional security flaws."

Speed of delivery has outpaced validation. The response can't be to slow down. It has to be to fundamentally change what testing actually means.

These are not new flaws; they're the same old flaws that were already traditionally hard to ascertain, just amplified by the new architecture layered on top of them.

Why this is actually one story

Here's what I think gets missed when these two problems are treated separately: they all share the same root cause. The speed of delivery has simply completely outpaced validation. AI coding assistants generate code faster than any security process was ever designed to handle, and AI agents invoke APIs faster than any inventory was designed to track. In both cases, something is running in production that has never been systematically attacked: not reviewed, not scanned, but *attacked*, the way a real malicious actor would.

Here's where we think that the response just can't be to slow down. It has to be to change what testing actually means.

Dynamic testing isn't a mere legacy artifact from the olden days of the pre-AI world; it's the discipline that the AI era has been waiting to actually need. In a post-AI world, it becomes a foundation, a load-bearing foundation.

So, the question doesn't become whether you should test dynamically, but rather whether your dynamic testing is intelligent enough and can even keep up with what's now running in production: AI-generated code, at AI-generated speed, behind APIs that agents are invoking faster than any human inventory can track.

Modern dynamic testing has to be AI-powered itself, fast enough for the pipeline, smart enough to distinguish real exploitability from noise, and purpose-built for an attack surface that didn't exist just two years ago.

Signal, not noise

Here's the real problem with AI-generated code at scale: it doesn't just create more code, it also creates more findings, more alerts, and more things that look like they need attention. Imagine all of that running through security tools that were designed for a much slower world.

Developers get swarmed with this noise and make a judgment call about where they need to spend their time, and most of the time it ends up being based on gut, seniority, or the ever-looming pressure of deadlines.

Now, alert fatigue isn't new, but in the AI coding era, it compounds: developers aren't reviewing one sprint's worth of findings anymore; they're reviewing output that would have taken a team an entire quarter to write, instead. If every finding gets equal weight, nothing gets fixed. Not with confidence.

The equation starts changing when you introduce correlation. When a static finding and a dynamic finding correlate to the exact same root cause (when the code says it’s vulnerable and runtime testing proves it is exploitable), you no longer have two issues to triage. You have one high-confidence fix that developers actually trust.

The noise subsides. The signal sharpens.

That precision matters more in the AI era than it ever did before. A 0.08% false positive rate isn't a product spec, it's what it looks like when security stops being a tax on developers and starts being an accelerant. AI writes the code, you prove what's actually broken, and developers ship faster with confidence, instead of slower with anxiety.

✔️ LLM-powered SAST identifying AI-generated code patterns and associated risk, in the IDE, in the pipeline, today.

✔️ Intelligent DAST that actively probes running applications and APIs to confirm real exploitability, especially BOLA, IDOR, and agent-specific authorization flaws.

✔️ Correlated findings that map static signals to dynamic confirmation: one prioritized fix, not a triage queue.

✔️ API discovery that surfaces the endpoints your AI agents are calling autonomously, including undocumented ones.

✔️ Continuous coverage embedded in the delivery pipeline, not a quarterly engagement.

What AI pentesting actually is (or should be)

Now, a lot of vendors are reaching for the "AI Pentesting" label right now. Some of them just mean that they're using LLMs to craft attack payloads, others mean that they're generating finding reports with "agents" (we question the concept of agency here, too). Only a few mean something way more interesting.

What the discipline actually demands, what the AI era specifically demands, is an approach that can test AI-generated code for AI-specific logic flaws, track the APIs that Agents are calling (including the undocumented ones), correlate dynamic findings with code-level context and intelligence to filter signal from noise, and all of this continuously rather than as a point-in-time, disjointed exercise.

This, by the way, should not be a feature list or a roadmap: it should be the standard. And it's one the industry hasn't been able to articulate well yet. Which is precisely why this is the moment to do it. So, do it we shall.

We’ve been building toward this standard for years, and the results we’re seeing with customers in production continue to validate the approach.

True AI-era security testing doesn’t just find more issues faster. It correlates static predictions with dynamic proof and delivers fixes developers can ship with confidence, not anxiety.

The conversation about AI security has been dominated by two supposedly disparate questions: can we secure AI models? And can we use AI to find vulnerabilities? Both of them legitimate, but neither standing by itself is sufficient.

The more urgent question is simpler: who is testing/attacking the code and APIs that AI is building and running?

If the answer isn't you, you can be sure it'll be someone (or someTHING) else.

Sign-up for Snyk API & Web

Start using our dev-first DAST engine today

Automatically find and expose vulnerabilities at scale with Snyk's AI-driven DAST engine to shift left with automation and fix guidance that integrates seamlessly into your SDLC.