Kubernetes Security: Common Issues and Best Practices

Learn more about Kubernetes security issues in a cloud native security context

What is Kubernetes Security?

Kubernetes Security is defined as the actions, processes and principles that should be followed to ensure security in your Kubernetes deployments. This includes – but is not limited to – securing containers, configuring workloads correctly, Kubernetes network security, and securing your infrastructure.

Why is Kubernetes security important?

Kubernetes security is important due to the variety of threats facing clusters and pods, including:

Malicious actors

Malware running inside containers

Broken container images

Compromised or rogue users

Without proper controls, a malicious actor who breaches an application could attempt to take control of the host or the entire cluster.

Where does Kubernetes security fit within the overall development process?

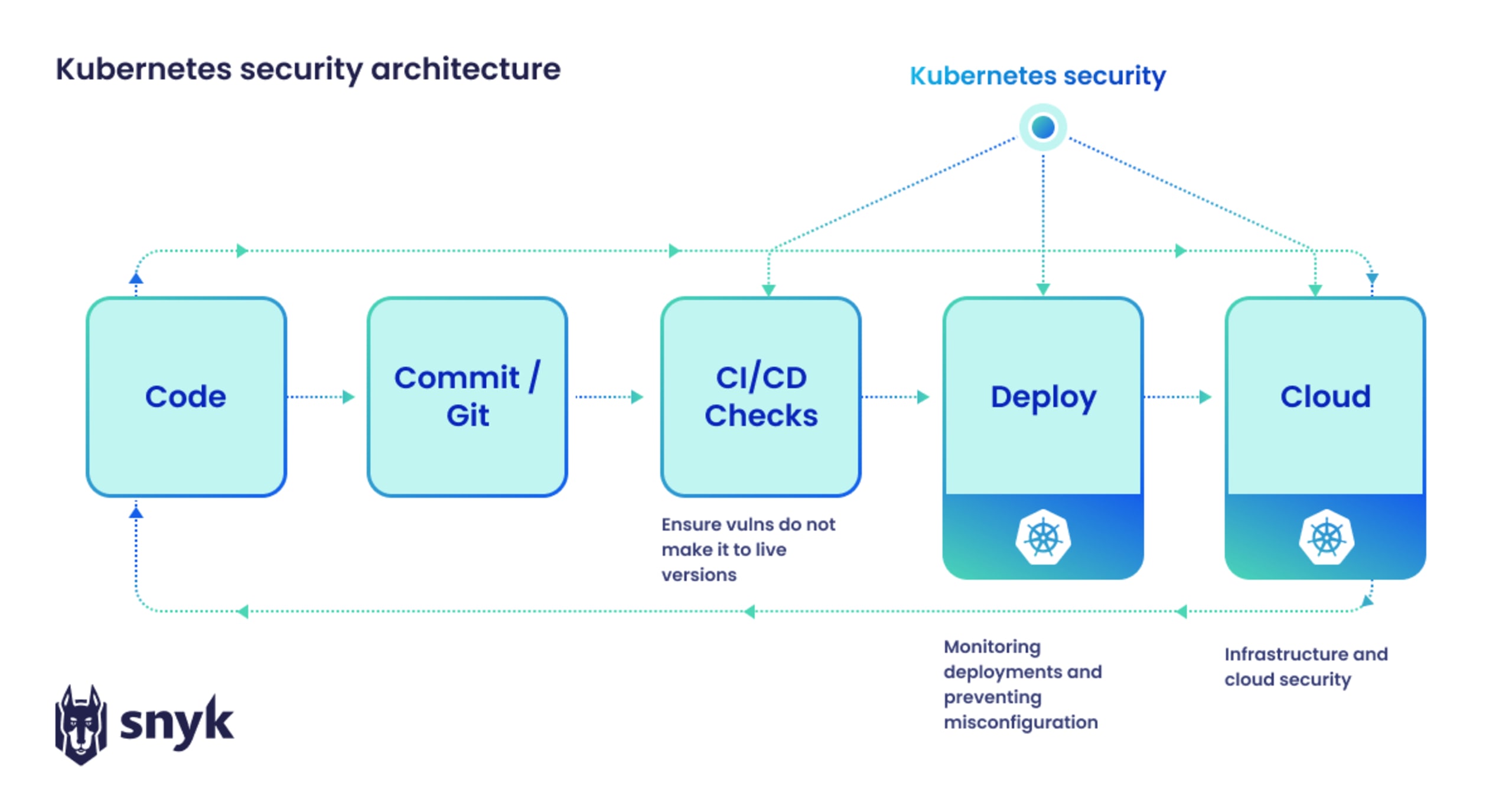

The diagram above shows where Kubernetes security fits into the overall software development life cycle (SDLC). Code is tested after the build stage to ensure that vulnerabilities do not make it to the live Kubernetes environment. Deployment and cloud infrastructure security then monitor running applications and deliver insights back to developers, creating a positive feedback loop from code to cloud, and back to code.

Key Kubernetes security issues

While a number of Kubernetes security issues exist, the three most important to consider are:

Configuring Kubernetes security controls: When deploying Kubernetes yourself from open source, none of the security controls are configured out of the box. Figuring out how they work and how to securely configure them is entirely the operator’s responsibility.

Deploying workloads securely: Whether you are using a Kubernetes distribution with pre-configured security controls or building a cluster and its security yourself, developers and application teams who may not be familiar with the ins and outs of Kubernetes may struggle to properly secure their workloads.

Lack of built-in security: While Kubernetes offers access controls and features to help create a secure cluster, the default setup is not secure on its own. Organizations need to adjust the workload, cluster, networking, and infrastructure configurations to ensure Kubernetes clusters and containers are adequately secured.

Kubernetes security challenges and solutions

While Kubernetes built-in security solutions do not cover all potential issues, there is no shortage of security solutions within the Kubernetes ecosystem.

Some challenging Kubernetes areas that will need extra security tools include:

Workload security: The majority of Kubernetes workloads are containers running on engines like containerd, cri-o, or Docker. The code and other packages in those containers must be free from vulnerabilities.

Workload configuration: Whether using Kubernetes manifests, Helm Charts, or templating tools, the configuration for deploying your applications in Kubernetes is typically done in code. This code affects the Kubernetes security controls that determine how a workload runs and what can or cannot happen in the event of a breach. For example, limiting each workload’s CPU, memory, and networking to the maximum expected use will help to contain any breaches to only the affected workload and ensure other services will not be compromised.

Cluster configuration: There are a number of Kubernetes security assessment tools available for your running clusters, such as Sysdig, Falco and Prometheus. Among other features, these tools use audit logs and other built-in Kubernetes metrics to check for adherence to Kubernetes security best practices, as well as CIS and other relevant security benchmarks.

Kubernetes networking: Network security plays a major role in Kubernetes. Pod communications, ingress, egress, service discovery, and, if needed, service meshes (e.g. Istio) should all be taken into account. Once a cluster has been breached, every service and machine in the network is at risk. It is therefore important to ensure your services and the communications between them are restricted to only what is required. This, combined with encrypting traffic between machines and services to keep it private, can help contain the threat and prevent a major network-wide breach.

Infrastructure security: Because a distributed application runs across many servers (using physical or virtual networking and storage), securing your Kubernetes infrastructure is crucial, particularly the control plane (master) nodes, databases, and certificates. A malicious actor who breaches your infrastructure could gain everything needed to access your cluster and applications as well.
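Two of the items above, workload configuration and Kubernetes networking, can be illustrated with a short sketch. It is written in Python purely for illustration, with manifests represented as plain dictionaries. The pod, namespace, and image names are hypothetical, and a NetworkPolicy only takes effect when the cluster's network plugin enforces it.

```python
def containers_missing_limits(pod):
    """Return the names of containers that lack a CPU or memory limit."""
    missing = []
    for container in pod["spec"].get("containers", []):
        limits = container.get("resources", {}).get("limits", {})
        if "cpu" not in limits or "memory" not in limits:
            missing.append(container["name"])
    return missing


def default_deny_policy(namespace):
    """Build a NetworkPolicy manifest that blocks all ingress and egress
    for every pod in the namespace until more specific policies allow it."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {
            "podSelector": {},  # an empty selector matches every pod
            "policyTypes": ["Ingress", "Egress"],
        },
    }


# Hypothetical pod used only to exercise the check.
example_pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web", "namespace": "shop"},
    "spec": {
        "containers": [
            {
                "name": "app",
                "image": "registry.example.com/shop/web:1.4.2",
                "resources": {"limits": {"cpu": "500m", "memory": "256Mi"}},
            },
            {"name": "sidecar", "image": "registry.example.com/shop/proxy:0.9.0"},
        ]
    },
}

print(containers_missing_limits(example_pod))  # ['sidecar']
print(default_deny_policy("shop")["spec"]["policyTypes"])
```

Starting from a deny-all policy and then allowing only the traffic each service needs is one way to keep communications limited to what is required.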

Each of these challenges can be addressed with security tools available in the Kubernetes ecosystem. The partnership between Snyk and Sysdig creates a tighter alignment between developers and SecOps by providing developer-first tooling for each aspect of Kubernetes security from container to cluster. Snyk provides developer-centric security tools that work throughout the SDLC, such as Snyk Container and Snyk Open Source. By combining those dev-first workflows with Sysdig, we’re able to feed insights from Sysdig’s runtime intelligence (which uses audit logs to monitor Kubernetes environments) to developers within their normal workflows. This partnership represents the first developer-first cloud security posture management platform available.

Developer-first container security

Snyk finds and automatically fixes vulnerabilities in container images and Kubernetes workloads.

Securing Kubernetes hosts

The cloud host represents the final layer of a Kubernetes environment. The major cloud providers offer management tools for Kubernetes resources, including Google Kubernetes Engine (GKE), Azure Kubernetes Service (AKS), and Amazon’s Elastic Kubernetes Service (EKS), but security still operates under a shared responsibility model.

There are several ways to secure Kubernetes hosts against vulnerabilities:

Secure the workload layer: Ensure container images are free of vulnerabilities and properly configured (e.g. disallow privileged containers).

Build boundaries between workloads and hosts: Restrict access to host resources by reducing container privileges and configuring the runtime environment to limit resource utilization in the event of a breach.

Monitor running clusters: Audit logs should be monitored to identify misconfigurations or suspicious behavior.
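As a rough illustration of the boundary between workloads and hosts, the sketch below scans a pod manifest (a Python dictionary) for settings that expose the host. The field names `hostNetwork`, `hostPID`, `hostIPC`, `hostPath`, and `privileged` come from the Pod spec; the function name and the example pod are hypothetical, and the list of checks is not exhaustive.

```python
def host_access_findings(pod):
    """List the ways a pod manifest reaches into its host."""
    spec = pod.get("spec", {})
    findings = []

    # Sharing host namespaces removes isolation between pod and node.
    for field in ("hostNetwork", "hostPID", "hostIPC"):
        if spec.get(field) is True:
            findings.append(f"{field} is enabled")

    # hostPath volumes mount directories from the node's filesystem.
    for volume in spec.get("volumes", []):
        if "hostPath" in volume:
            path = volume["hostPath"].get("path")
            findings.append(f"volume {volume['name']} mounts host path {path}")

    # Privileged containers have broad access to host devices and the kernel.
    for container in spec.get("containers", []):
        if container.get("securityContext", {}).get("privileged") is True:
            findings.append(f"container {container['name']} is privileged")

    return findings


# Hypothetical pod that crosses the host boundary in three ways.
risky_pod = {
    "spec": {
        "hostNetwork": True,
        "volumes": [{"name": "runtime", "hostPath": {"path": "/var/run"}}],
        "containers": [
            {"name": "agent", "securityContext": {"privileged": True}},
            {"name": "helper"},
        ],
    }
}

for finding in host_access_findings(risky_pod):
    print(finding)
```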

Kubernetes security context settings you should implement

One way to prevent pods and containers from accessing the rest of the Kubernetes system is to use securityContexts. Here are ten major security context settings that every pod and container should use:

runAsNonRoot: Setting this to “true” prevents containers from running as root users.

runAsUser/runAsGroup: These ensure containers use a specified runtime user and group.

seLinuxOptions: This sets the SELinux context for the container or pod.

seccompProfile: This allows you to set the seccomp profile on the Linux kernel to restrict what containers can do.

privileged/allowPrivilegeEscalation: It is generally not recommended to allow privileged containers or to allow processes in them to elevate privileges, so these should be set to false.

capabilities: This allows you to control access to kernel-level calls. Only provide the minimum required.

readOnlyRootFilesystem: Set this to true when possible. This helps prevent attackers from installing software or changing configurations on the filesystem.

procMount: The “Default” setting should be used except in specific situations such as nested containers.

fsGroup/fsGroupChangePolicy: This setting should be used with caution because changing ownership of a volume using fsGroup can impact pod startup performance and have negative ramifications on shared file systems.

sysctls: Modification of kernel parameters using sysctls should be avoided unless you have very specific requirements, as this may destabilize the host system.
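To make several of these settings concrete, here is a minimal sketch of a container-level securityContext expressed as a Python dictionary, together with a small check that flags the riskier values. The field names are the ones Kubernetes uses; the UID and GID of 10001 and the function name are assumptions chosen for illustration, and the check covers only a subset of the settings listed above.

```python
# A hardened container-level securityContext, as it would appear under
# spec.containers[].securityContext in a manifest.
HARDENED_CONTAINER_CONTEXT = {
    "runAsNonRoot": True,
    "runAsUser": 10001,   # assumed non-root UID, pick one that suits your image
    "runAsGroup": 10001,  # assumed GID
    "privileged": False,
    "allowPrivilegeEscalation": False,
    "readOnlyRootFilesystem": True,
    "capabilities": {"drop": ["ALL"]},  # add back only what the app needs
    "seccompProfile": {"type": "RuntimeDefault"},
}


def audit_security_context(ctx):
    """Return a list of problems found in a container securityContext."""
    problems = []
    if ctx.get("runAsNonRoot") is not True:
        problems.append("runAsNonRoot should be true")
    if ctx.get("runAsUser") == 0:
        problems.append("runAsUser should not be 0 (root)")
    if ctx.get("privileged") is True:
        problems.append("privileged should be false")
    if ctx.get("allowPrivilegeEscalation") is not False:
        problems.append("allowPrivilegeEscalation should be explicitly false")
    if ctx.get("readOnlyRootFilesystem") is not True:
        problems.append("readOnlyRootFilesystem should be true when possible")
    if "ALL" not in ctx.get("capabilities", {}).get("drop", []):
        problems.append("capabilities should drop ALL and add back the minimum")
    return problems


print(audit_security_context(HARDENED_CONTAINER_CONTEXT))  # []
for problem in audit_security_context({"privileged": True, "runAsUser": 0}):
    print(problem)
```

A check like this could run in CI against rendered manifests, so that weak settings are caught before a workload reaches the cluster.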

Kubernetes security observability

When it comes to K8s security, using secure configurations helps leverage Kubernetes’ security features. It’s also important to use effective monitoring processes to maintain visibility over Kubernetes resources. Here are a few tips for Kubernetes monitoring:

Use tags and labels: These define applications using metadata to simplify management.

Monitor the entire set of containers, not individual containers: Kubernetes manages pods, not containers, so it's important to monitor at the pod level.

Use service discovery: This helps monitor Kubernetes services in spite of their volatile nature.

Take advantage of alerts: Alerts can be set up based on what defines a healthy Kubernetes environment. Be aware of the problem of over-alerting since distributed systems can contain a lot of possible monitoring endpoints.

Inspect the control plane: The Kubernetes control plane behaves like an air traffic controller for workloads and clusters. Inspecting each component of the control plane helps ensure the efficient orchestration and scheduling of jobs.

Kubernetes security best practices by phase

When it comes to Kubernetes security, here are some best practices for each phase:

Development/Design Phase

Architecture choices made at design time affect how secure a Kubernetes environment can be. Using a multi-cluster architecture or multiple namespaces with proper RBAC controls can help isolate workloads.
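As a sketch of namespace isolation with RBAC, the snippet below builds a read-only Role scoped to one namespace and a RoleBinding that grants it to a group. The manifests are Python dictionaries that can be serialized to JSON and applied to a cluster. The namespace, role, and group names are hypothetical.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"


def read_only_role(namespace, name="workload-reader"):
    """Build a Role that can only read pods and deployments in one namespace."""
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "Role",
        "metadata": {"name": name, "namespace": namespace},
        "rules": [
            # Pods live in the core API group, written as an empty string.
            {"apiGroups": [""], "resources": ["pods"],
             "verbs": ["get", "list", "watch"]},
            {"apiGroups": ["apps"], "resources": ["deployments"],
             "verbs": ["get", "list", "watch"]},
        ],
    }


def bind_role_to_group(role, group):
    """Build a RoleBinding that grants the Role to a group, in the same namespace."""
    meta = role["metadata"]
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{meta['name']}-binding",
                     "namespace": meta["namespace"]},
        "subjects": [{"kind": "Group", "name": group, "apiGroup": RBAC_GROUP}],
        "roleRef": {"kind": "Role", "name": meta["name"], "apiGroup": RBAC_GROUP},
    }


role = read_only_role("team-a")              # hypothetical namespace
binding = bind_role_to_group(role, "team-a-developers")  # hypothetical group
print(json.dumps([role, binding], indent=2))
```

Because both objects are namespaced, members of the group gain no access to workloads in other namespaces through this binding.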

Build Phase

Choose a minimal image from a vetted repository.

Use container scanning tools to uncover any vulnerabilities or misconfigurations in containers.

Deployment Phase

Images should be scanned and validated prior to deployment.

An admission controller can be used to automate this validation so only vetted container images are deployed.
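The decision logic of such an admission check might look like the sketch below. It takes an AdmissionReview request (as a validating webhook would receive it) and allows a pod only if every image comes from a vetted registry. The registry prefix and function name are hypothetical, and the HTTPS server and webhook registration that a real admission webhook needs are left out.

```python
# Hypothetical allow-list of registry prefixes considered vetted.
VETTED_REGISTRIES = ("registry.example.com/",)


def review_images(admission_review):
    """Return an AdmissionReview response that rejects unvetted images."""
    request = admission_review["request"]
    pod_spec = request["object"].get("spec", {})
    containers = pod_spec.get("containers", []) + pod_spec.get("initContainers", [])

    # str.startswith accepts a tuple, so any vetted prefix is enough.
    rejected = [c["image"] for c in containers
                if not c["image"].startswith(VETTED_REGISTRIES)]

    response = {"uid": request["uid"], "allowed": not rejected}
    if rejected:
        response["status"] = {
            "message": "images not from a vetted registry: " + ", ".join(rejected)
        }
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }


# Hypothetical request for a pod with one vetted and one unvetted image.
sample_review = {
    "apiVersion": "admission.k8s.io/v1",
    "kind": "AdmissionReview",
    "request": {
        "uid": "example-uid",
        "object": {
            "spec": {
                "containers": [
                    {"name": "app", "image": "registry.example.com/shop/web:1.4.2"},
                    {"name": "debug", "image": "docker.io/library/busybox:latest"},
                ]
            }
        },
    },
}

print(review_images(sample_review)["response"])
```

This sketch only checks where an image comes from. Confirming that an image has actually been scanned would need a lookup against the scanner's results, which is outside what is shown here.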

Runtime Phase

The runtime environment represents the final layer of defense for Kubernetes resources.

The Kubernetes API server can generate audit logs once an audit policy is configured. These logs should be monitored using a runtime security tool, such as Sysdig.

Images and policy files should also be continuously scanned to prevent malware or misconfigurations in a runtime environment.
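To give a feel for what audit log monitoring involves, the sketch below scans audit events written as one JSON object per line and flags two kinds of activity: reads of Secrets and exec sessions into pods. The fields used (`verb`, `user.username`, `objectRef`) are part of the Kubernetes audit event format, while the rules, usernames, and sample events are hypothetical and far simpler than what a dedicated runtime security tool applies.

```python
import json


def suspicious_events(audit_lines):
    """Return (username, action, namespace) tuples for events worth a look."""
    findings = []
    for line in audit_lines:
        event = json.loads(line)
        ref = event.get("objectRef", {})
        user = event.get("user", {}).get("username", "unknown")
        verb = event.get("verb")

        if ref.get("resource") == "secrets" and verb in ("get", "list", "watch"):
            findings.append((user, "read secrets", ref.get("namespace")))
        elif ref.get("resource") == "pods" and ref.get("subresource") == "exec":
            findings.append((user, "exec into pod", ref.get("namespace")))
    return findings


# Hypothetical audit events, trimmed to the fields the check uses.
sample_log = [
    json.dumps({"verb": "list", "user": {"username": "ci-bot"},
                "objectRef": {"resource": "secrets", "namespace": "shop"}}),
    json.dumps({"verb": "create", "user": {"username": "alice"},
                "objectRef": {"resource": "pods", "subresource": "exec",
                              "namespace": "shop"}}),
    json.dumps({"verb": "get", "user": {"username": "alice"},
                "objectRef": {"resource": "pods", "namespace": "shop"}}),
]

for finding in suspicious_events(sample_log):
    print(finding)
```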

Using Snyk for Kubernetes security

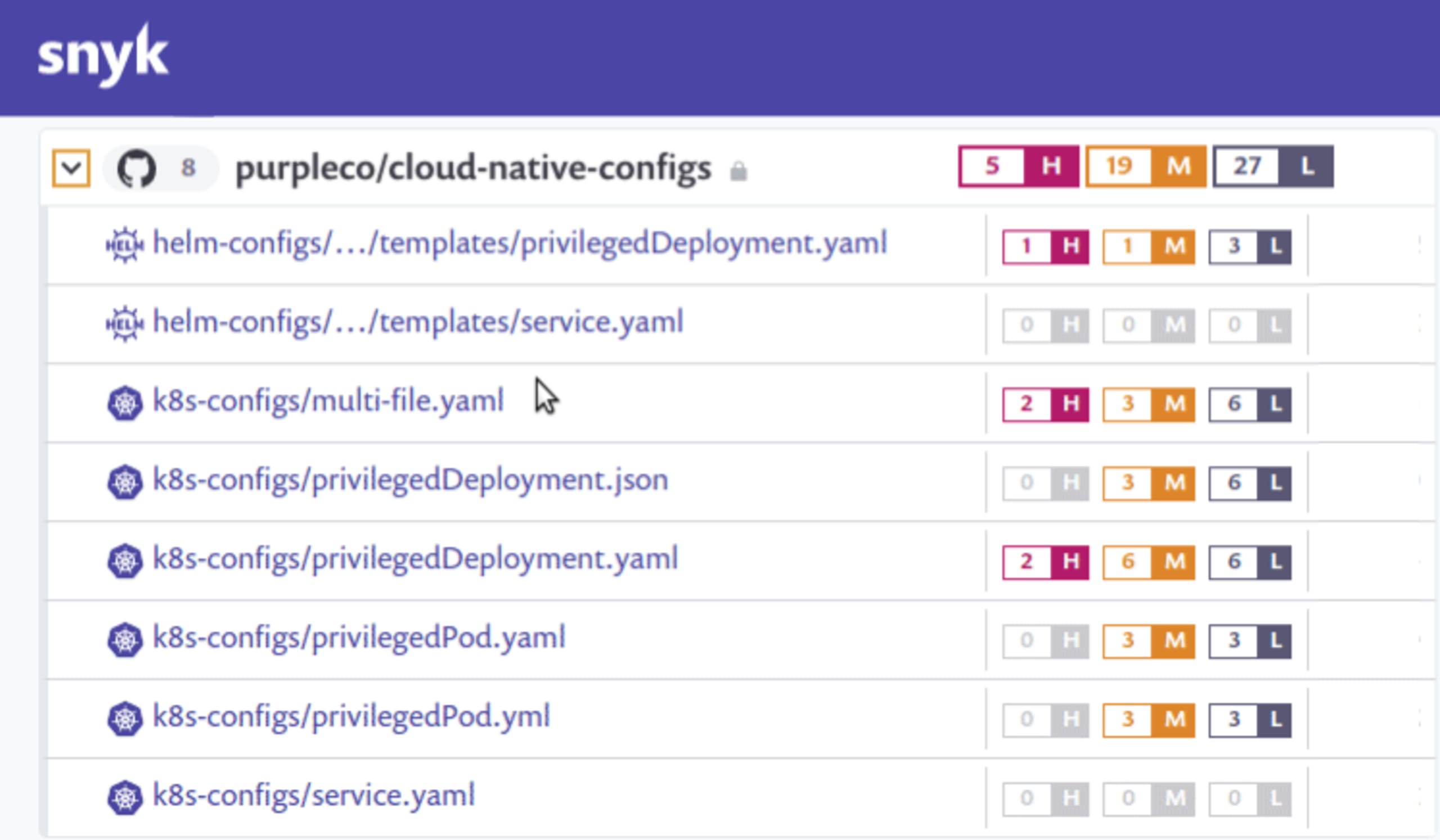

Snyk can help you improve and maintain your Kubernetes security posture with its developer-first security tools, including Snyk Container and Snyk IaC. Snyk automates the scanning of application code, container images, and Kubernetes configurations and delivers insights and recommendations to developers within their workflows.

“A product like Snyk helps us to identify areas of our services that are potentially exposed to threats from external actors,” Rizzo explained. ... “Now that Snyk is part of our CI/CD pipeline, security checks are always done earlier during development.”

Our partnership with Sysdig extends our security capabilities into the runtime environment.

Kubernetes security FAQ

Is Kubernetes a security risk?

While Kubernetes offers security features and settings that can help, security is not built in out of the box. Operators need to configure these controls to ensure the containers and code running on the cluster are safe.

Given that developers increasingly write the configurations for the containers their application runs in, they need to consider Kubernetes security in their plans.

How do you protect Kubernetes Secrets?

Kubernetes Secrets are stored unencrypted by default, so the first step towards securing them is enabling encryption at rest. Role-based access controls should then be applied to limit read and write capabilities by users and to set privileges for modifying or creating new Secrets.
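On a self-managed cluster, encryption at rest is typically enabled by passing an EncryptionConfiguration file to the API server through its `--encryption-provider-config` flag. The sketch below generates such a configuration with a random 32-byte key for the `aescbc` provider. The key name is an assumption, managed Kubernetes services usually expose this setting in their own way, and a KMS-backed provider may be preferable where one is available.

```python
import base64
import json
import secrets


def encryption_config(key_name="key1"):
    """Build an EncryptionConfiguration that encrypts Secrets with AES-CBC."""
    # The aescbc provider expects a base64-encoded key; 32 random bytes here.
    key = base64.b64encode(secrets.token_bytes(32)).decode("ascii")
    return {
        "apiVersion": "apiserver.config.k8s.io/v1",
        "kind": "EncryptionConfiguration",
        "resources": [
            {
                "resources": ["secrets"],
                "providers": [
                    # The first provider is used for writing new data.
                    {"aescbc": {"keys": [{"name": key_name, "secret": key}]}},
                    # identity lets the API server still read Secrets that
                    # were stored before encryption was enabled.
                    {"identity": {}},
                ],
            }
        ],
    }


config = encryption_config()
# JSON is valid YAML, so this output can be saved as the configuration file.
print(json.dumps(config, indent=2))
```

The generated file contains the encryption key itself, so it should be readable only by the API server. Secrets created before the change stay unencrypted until they are rewritten.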

How do you secure containers in Kubernetes?

Kubernetes container security starts with selecting a trustworthy, minimal base image. Scanning tools should be used to uncover vulnerabilities or misconfigurations in the container before it deploys to production. Runtime monitoring tools can then maintain visibility over running containers using audit logs and other methods.

How do you maintain security in Kubernetes?

Kubernetes has built-in security features, but they require proper configuration to work effectively. Security starts in the design phase with choosing a secure architecture and base container image. Scanning tools can then help monitor the build process and uncover any vulnerabilities or misconfigurations before the container goes to production. Finally, runtime monitoring tools help maintain visibility over running containers.